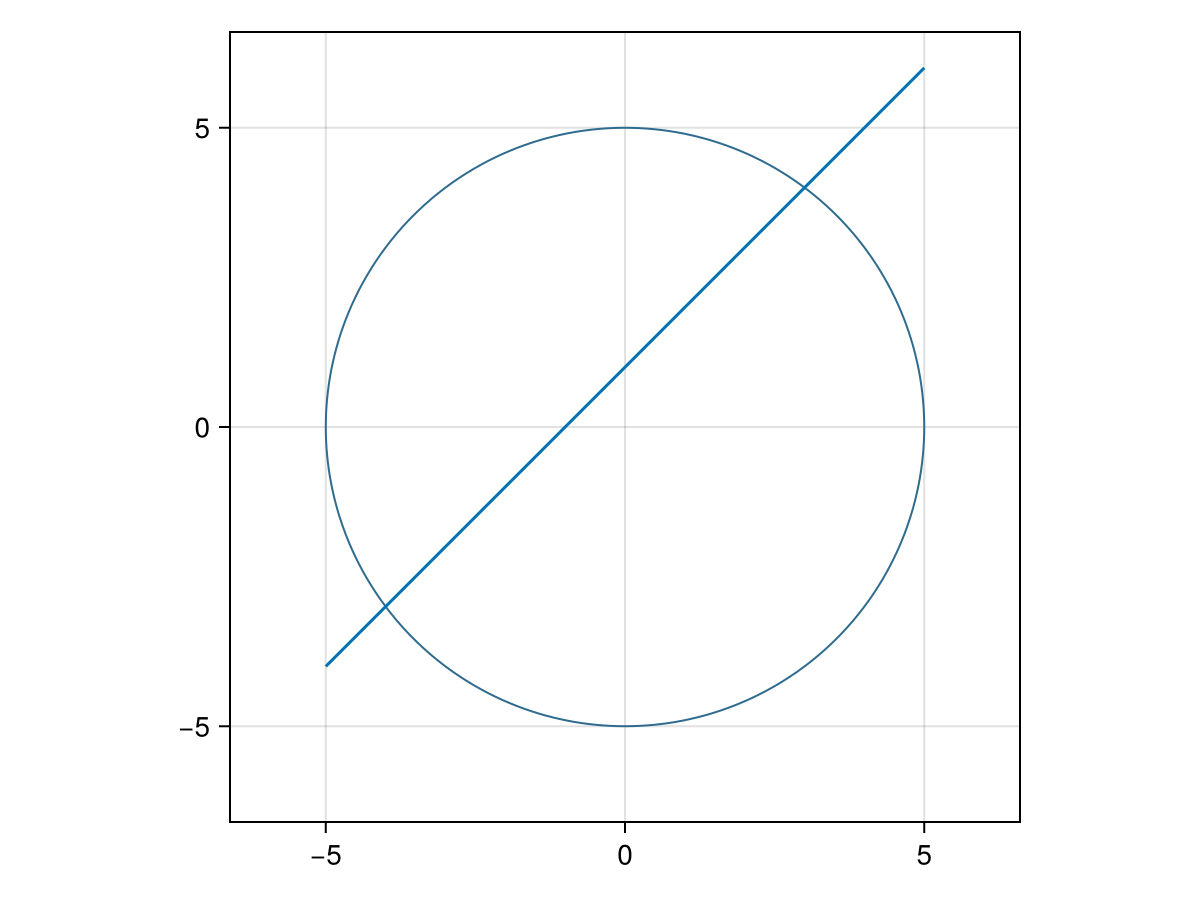

Let’s run Newton’s method on the function. To do this, we will build the function \(\vec{F}(\vec{x})\) using Julia notation. The equations \(y=x+1\) and \(x^2+y^2=25\) can be combined as the function

F(x::Vector{<:Real}) = [x[1]^2+x[2]^2-25, x[1]-x[2]+1]

where note that the argument

x has been declared as a Real vector and that the first argument is the circle and the second is the line. Notice that the variables \(x\) and \(y\) are encoded as x[1] and x[2]

2-element Vector{Float64}:

3.0000000730550047

4.000000073055005

which finds the point \((3,4)\) to within the default \(10^{-6}\text{.}\) If we start with the initial point

[-1,-1] the point \((-3,-4)\) is found.